
MSU Quality Measurement Tool: Metrics information

PSNR. This metric, which is often used in practice, is called peak-to-peak signal-to-noise ratio (PSNR):

$$\mathrm{PSNR} = 10 \log_{10} \frac{\mathrm{MaxErr}^2 \cdot w \cdot h}{\sum_{i=1}^{w} \sum_{j=1}^{h} (X_{ij} - Y_{ij})^2}$$

where MaxErr is the maximum possible absolute value of the color component difference, w is the video width and h is the video height. Generally, this metric is equivalent to Mean Square Error, but it is more convenient. It has the same disadvantages as the MSE metric.

In MSU VQMT you can calculate PSNR for all YUV and RGB components and for the L component of the LUV color space. MSU VQMT has four PSNR implementations. With the correct way of calculation, MaxErr is the maximum possible absolute value of the color difference. However, this way of calculation has a drawback: if the color depth is simply increased from 8 bits to a higher depth, the PSNR values change, because MaxErr changes with the bit depth. The other implementations would not change, because they use the upper boundary of the color difference as MaxErr. The upper boundary is 256. This approach is less correct, but it is used often because it is fast.
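As a rough illustration of the PSNR definition above, here is a minimal Python sketch. It is not MSU VQMT code: the function name `psnr`, the list-of-rows plane layout and the default MaxErr of 255 (the usual choice for 8-bit components) are assumptions made for the example.

```python
import math

def psnr(x, y, max_err=255.0):
    """PSNR in dB between two equally sized planes given as lists of rows.

    max_err is the MaxErr term: 255 is assumed here for 8-bit components,
    and 256 can be passed to mimic the "upper boundary" variant.
    """
    h = len(x)
    w = len(x[0])
    # Sum of squared differences over every pixel of the plane.
    sq_sum = sum((x[i][j] - y[i][j]) ** 2 for i in range(h) for j in range(w))
    if sq_sum == 0:
        return math.inf  # identical planes: PSNR is unbounded
    return 10.0 * math.log10(max_err ** 2 * w * h / sq_sum)

original = [[10, 20], [30, 40]]
processed = [[11, 19], [30, 42]]
print(psnr(original, processed))                 # exact MaxErr
print(psnr(original, processed, max_err=256.0))  # upper-boundary MaxErr
```

Passing 256 instead of 255 shifts every result by the same small constant, which is why the upper-boundary variant does not depend on the bit depth of the input.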

It means that if, for example, R-RGB PSNR is calculated for a [...] YUV file, then [...] is used as MaxErr.

The correct way to calculate average PSNR for a sequence is to calculate the average MSE over all frames (the average MSE is the arithmetic mean of the per-frame MSE values) and then calculate PSNR from it using the ordinary PSNR equation. This way of calculating average PSNR is used in [...]. However, sometimes it is necessary to take the simple average of the per-frame PSNR values. This metric is used for testing codecs and filters.

Delta. The value of this metric is the mean difference of the color components at corresponding points of the images. This metric is used for testing codecs and filters. Note: red marks Xij > Yij, green marks Xij < Yij.

MSU Blurring Metric. This metric allows you to compare the degree of blurring of two images.

If the value of the metric for the first picture is greater than for the second, the second picture is more blurred than the first. Source. Processed.

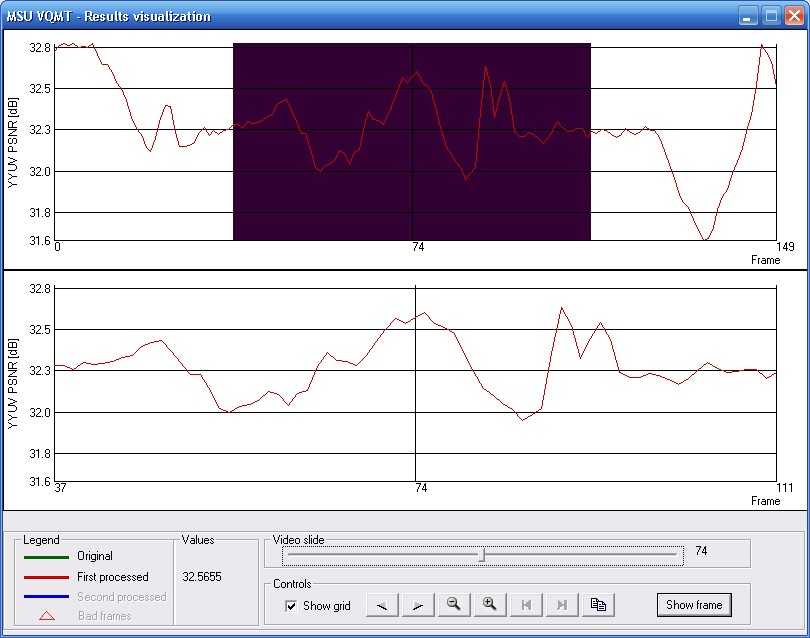

MSU Blurring Metric. Note: red means the first image is sharper than the second; green means the second image is sharper than the first.

MSU Blocking Metric. This metric was created to measure the subjective blocking effect in a video sequence. For example, in high-contrast areas of the frame blocking is not noticeable, but in smooth areas the block edges are conspicuous. The metric also contains a heuristic method for detecting object edges that coincide with block edges. In this case the metric value is reduced, which allows blocking to be measured more precisely. We use information from previous frames to achieve better accuracy. Source. MSU Blocking Metric.

SSIM Index. SSIM Index is based on measuring three components (luminance similarity, contrast similarity and structural similarity) and combining them into a resulting value. Original paper. Original. Processed. SSIM (fast). SSIM (precise). Note: brighter areas correspond to greater difference.

There are 2 implementations of SSIM in our program: fast and precise. The fast one is equal to our previous SSIM implementation. The difference is that the fast one uses a box filter, while the precise one uses Gaussian blur.

Notes: The fast implementation's visualization seems to be shifted. This effect is caused by the sum calculation. The sum is calculated over the block to the bottom-left or up-left of the [...].

The SSIM metric has two coefficients. They depend on the maximum value of the image color component and are calculated using the following equations:

$$C_1 = 0.01 \cdot 0.01 \cdot \mathrm{video1Max} \cdot \mathrm{video2Max}$$

$$C_2 = 0.03 \cdot 0.03 \cdot \mathrm{video1Max} \cdot \mathrm{video2Max}$$

where video1Max is the maximum value of a given color component for the first video and video2Max is the maximum value of the same color component for the second video. The maximum value of a color component is calculated in the same way as for PSNR:

videoMax = 255 for 8-bit color components
videoMax = 255 + 3/4 for 10-bit color components
videoMax = 255 + 63/64 for 14-bit color components
videoMax = 255 + 255/256 for 16-bit color components

MultiScale SSIM INDEX. MultiScale SSIM INDEX is based on the SSIM metric of several downscaled levels of the original images.
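The SSIM coefficients described above can be sketched in Python as follows. This is an illustration under stated assumptions, not the MSU implementation: the coefficients are read as 0.01² and 0.03² times the product of the two maximum values, the helper names `video_max`, `ssim_constants` and `ssim_window` are hypothetical, and the window statistics use uniform (box) weights on a single window with the standard SSIM formula.

```python
def video_max(bit_depth):
    """Maximum component value on the 8-bit scale.

    Gives 255 for 8 bits, 255 + 3/4 for 10 bits and 255 + 255/256 for 16 bits.
    """
    return (2 ** bit_depth - 1) / 2 ** (bit_depth - 8)

def ssim_constants(bits1=8, bits2=8):
    """Return (C1, C2) for two videos with the given bit depths."""
    m1, m2 = video_max(bits1), video_max(bits2)
    return 0.01 * 0.01 * m1 * m2, 0.03 * 0.03 * m1 * m2

def ssim_window(x, y, c1, c2):
    """SSIM of two equally sized windows (flat lists), uniform weights."""
    n = len(x)
    mx = sum(x) / n
    my = sum(y) / n
    vx = sum((a - mx) ** 2 for a in x) / n    # variance of x
    vy = sum((b - my) ** 2 for b in y) / n    # variance of y
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y)) / n
    return ((2 * mx * my + c1) * (2 * cov + c2)) / (
        (mx * mx + my * my + c1) * (vx + vy + c2)
    )

c1, c2 = ssim_constants(8, 8)
print(ssim_window([10, 20, 30, 40], [12, 18, 33, 37], c1, c2))
```

A full-frame metric would slide such a window over the image and average the per-window values; a Gaussian-weighted window would replace the plain means and variances used here.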
The result is a weighted average of those metrics. Original paper. Source. Processed. MSSSIM (fast). MSSSIM (precise). Note: brighter areas correspond to greater difference.

The difference is that the fast one uses a box filter, while the precise one uses Gaussian blur.

Notes: Because the resulting metric is calculated as a product of several metric values below 1, [...]. The fast implementation's visualization seems to be shifted. This effect is caused by the sum calculation. The sum is calculated over the block to the bottom-left or up-left of the [...].

3-SSIM. There are 3 types of regions: edges, textures and smooth regions. The resulting metric is calculated as a weighted average of the SSIM metric for those regions. In fact, the human eye sees differences more precisely in textured or edge regions than in smooth regions. The division is based on the gradient magnitude at every pixel of the images. Original paper. Source. Processed. 3-SSIM regions division. SSIM metric. Note: brighter areas correspond to greater difference.

Spatio-Temporal SSIM. The idea of this algorithm is to use motion-oriented weighted windows for SSIM Index. The MSU Motion Estimation algorithm is used to retrieve this information. Based on the ME results, a weighting window is constructed for every pixel. This window can use up to [...]. Then SSIM Index is calculated for every window to take temporal distortions into account as well. In addition, another pooling technique is used in this implementation: only the lowest 6% of the metric values for the frame are used to calculate the frame metric value. This causes larger differences in metric values between different files. Original paper. Source. Processed. Metric visualization. Note: brighter blocks correspond to greater difference.

VQM. VQM uses DCT to correspond to human perception. Original paper. Note: brighter blocks correspond to greater difference.

MSE.

Last updated 28 July [...]. Project updated by Server Team and MSU Video Group. Project sponsored by YUVsoft Corp. Project supported by MSU Graphics & Media Lab.

This may be difficult if the timestamps do not match (they may be modified by the RTP server). Next, decode each frame into its YUV channels. You can average the three PSNR values at the end, but this puts too much weight on the U and V channels. I recommend just using the Y channel, as it is the most important. And since you are measuring packet loss, the values will be strongly correlated anyway. Next, calculate your mean squared error like so: int [...]
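The original snippet is cut off after its first token, so the following is only a stand-in: a minimal Python sketch of a Y-channel MSE, followed by a sequence PSNR computed from the MSE averaged over all frames. It assumes the frames are already matched one to one and decoded to raw 8-bit planar YUV with the Y plane stored first (as in the I420 layout). The names `y_plane`, `mse` and `sequence_psnr` are hypothetical.

```python
import math

def y_plane(frame, width, height):
    """Luma bytes of a raw planar YUV frame whose Y plane comes first."""
    return frame[: width * height]

def mse(a, b):
    """Mean squared error between two equally sized byte sequences."""
    if len(a) != len(b):
        raise ValueError("planes must have the same size")
    return sum((p - q) ** 2 for p, q in zip(a, b)) / len(a)

def sequence_psnr(ref_frames, recv_frames, width, height, max_err=255.0):
    """Y-channel PSNR from the MSE averaged over all frame pairs."""
    mses = [
        mse(y_plane(r, width, height), y_plane(d, width, height))
        for r, d in zip(ref_frames, recv_frames)
    ]
    mean_mse = sum(mses) / len(mses)
    if mean_mse == 0:
        return math.inf  # no luma difference in any frame
    return 10.0 * math.log10(max_err ** 2 / mean_mse)

# Two 2x2 frames: 4 luma bytes followed by one U and one V byte each.
sent = [bytes([10, 10, 10, 10, 128, 128])]
received = [bytes([12, 10, 10, 10, 0, 0])]
print(sequence_psnr(sent, received, 2, 2))
```

Only the first `width * height` bytes of each frame are compared, so differences in the chroma planes do not affect the result.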


December 2016
